DS4 Inference Runtime: Expert Analysis

- DS4 (DwarfStar4) is a small LLM inference runtime that can run DeepSeek 4.

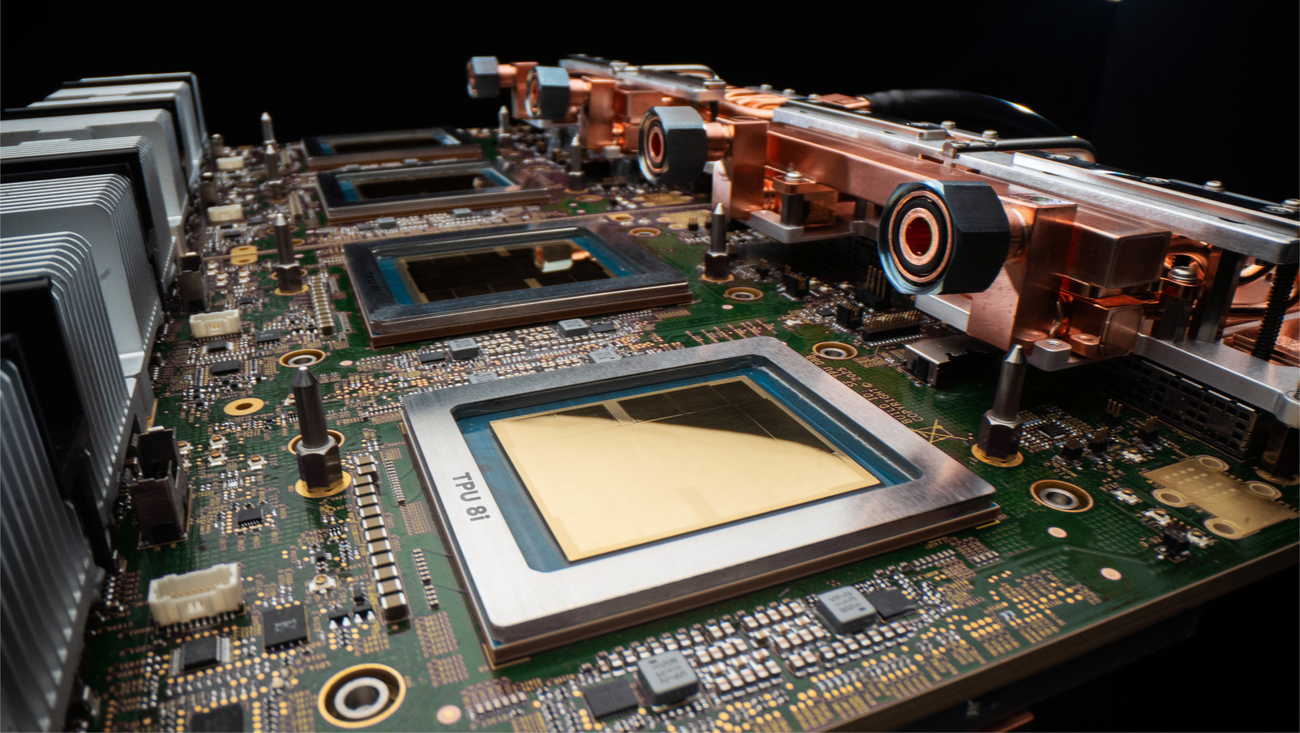

- It reportedly requires 96GB of VRAM at present, a figure implied by the project's blog post.

- How quickly local models will keep improving, and where the ceiling lies, is still an open question.

The Buzz Score

The Internet’s Verdict: 70% Hyped, 30% Skeptical

Forum Voices

Users are discussing the potential of DS4. One user notes:

"DwarfStar4 is a small LLM inference runtime that can run DeepSeek 4. The blog post implies that it currently requires 96GB of VRAM."

Another user wonders how far local models can go:

"With ‘intelligence’ (or whatever you want to call it) and speed both seeming to ramp up quickly with local models I wonder what the growth rate and ceiling(?) might be in this space."

Technical Details

DS4 supports multiple backends, including Metal and NVIDIA CUDA.
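To put the reported 96GB VRAM figure in context, here is a minimal back-of-the-envelope sketch of how weight memory scales with parameter count and quantization. The 100-billion-parameter count, the 10% overhead allowance, and the function names are illustrative assumptions. They are not DeepSeek 4's actual size and not part of DS4.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the model weights alone, in GB (10^9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


def fits_in_vram(num_params: float, bits_per_weight: float,
                 vram_gb: float, overhead_fraction: float = 0.1) -> bool:
    """True if the weights plus a fractional overhead allowance fit in vram_gb.

    overhead_fraction is a rough, assumed allowance for activations and
    caches; real overhead depends on context length and the runtime.
    """
    needed = weight_memory_gb(num_params, bits_per_weight) * (1 + overhead_fraction)
    return needed <= vram_gb


if __name__ == "__main__":
    HYPOTHETICAL_PARAMS = 100e9  # assumed for illustration only
    VRAM_BUDGET_GB = 96          # the figure reported for DS4

    for bits in (16, 8, 4):
        gb = weight_memory_gb(HYPOTHETICAL_PARAMS, bits)
        ok = fits_in_vram(HYPOTHETICAL_PARAMS, bits, VRAM_BUDGET_GB)
        print(f"{bits}-bit weights: ~{gb:.0f} GB, fits in {VRAM_BUDGET_GB} GB: {ok}")
```

Under these assumptions, only the 4-bit variant of the hypothetical model fits in the budget, which is why quantization tends to decide whether a large model can run locally at all.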
